As artificial intelligence systems—especially large language models (LLMs)—become deeply integrated into enterprise workflows, the question is no longer just about model accuracy, but about alignment. How do we ensure that AI systems behave in ways that are helpful, safe, and aligned with human intent? This is where Reinforcement Learning from Human Feedback (RLHF) has emerged as a foundational technique.

At Annotera, we view RLHF not simply as a training method, but as a strategic layer that connects human intelligence with machine learning systems. In this article, we break down what RLHF is, how it works, and why it is critical for modern AI systems.

Understanding RLHF: A Human-Centric Approach to AI Training

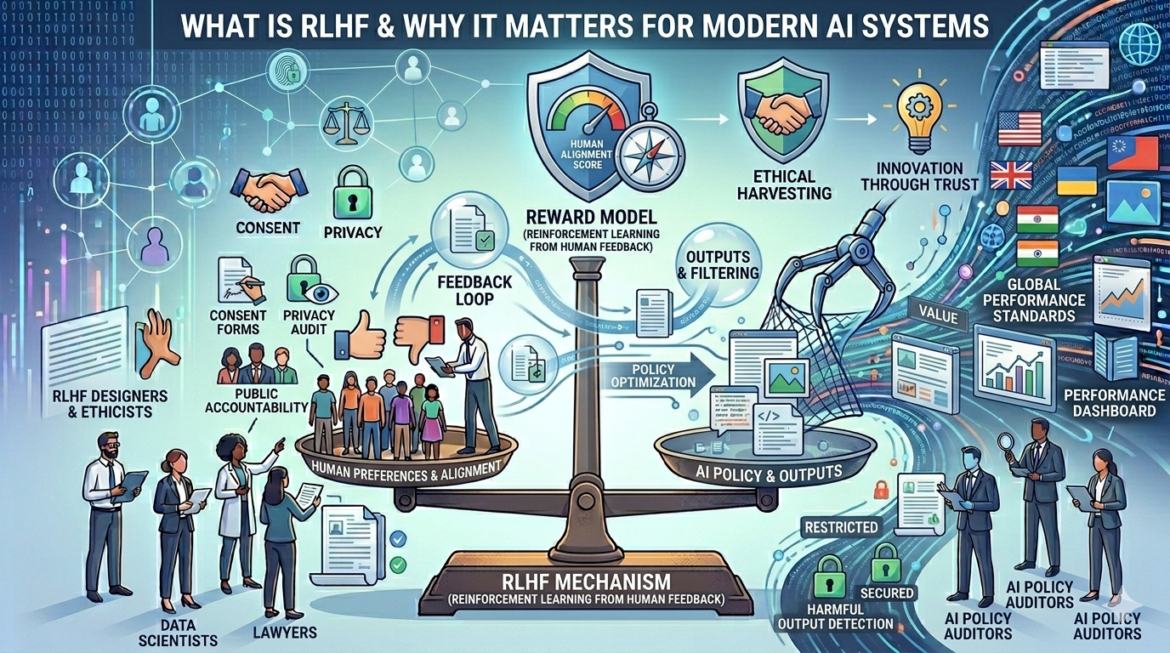

Reinforcement Learning from Human Feedback (RLHF) is a machine learning paradigm that uses human evaluations to guide model behavior. Instead of relying solely on predefined rules or static datasets, RLHF introduces human judgment into the training loop to optimize outputs.

In traditional reinforcement learning, models learn by maximizing a reward function. However, defining a perfect reward function for complex tasks such as natural language generation is extremely difficult. RLHF addresses this limitation by replacing hard-coded reward signals with a reward signal learned from human preferences.

In practice, this means human annotators review model outputs and rank or rate them based on quality, relevance, safety, or accuracy. These evaluations are then used to train a “reward model,” which guides further optimization of the AI system.

How RLHF Works: A Step-by-Step Pipeline

RLHF is typically implemented through a multi-stage pipeline:

1. Supervised Fine-Tuning (SFT)

The process begins with a pre-trained language model. Human annotators create high-quality example responses to prompts, which are used to fine-tune the model in a supervised manner.
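As a rough illustration of what "supervised" means here, the objective at this stage is usually the negative log-likelihood of the annotator-written response. The sketch below assumes we already have the log-probability the model assigns to each token of one demonstration; the function name and the numbers are hypothetical.

```python
import math

def sft_loss(token_log_probs):
    """Mean negative log-likelihood over the tokens of one demonstration response."""
    return -sum(token_log_probs) / len(token_log_probs)

# Hypothetical per-token probabilities the model assigns to an annotator-written response.
confident = [math.log(0.9), math.log(0.8), math.log(0.95)]
uncertain = [math.log(0.3), math.log(0.2), math.log(0.4)]

# A model that already reproduces the demonstration well has the lower loss.
print(sft_loss(confident) < sft_loss(uncertain))
```

Fine-tuning lowers this loss across many demonstrations, which pulls the model toward the style and content of the human-written examples.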

2. Reward Model Training

Next, human evaluators compare multiple outputs for the same prompt and rank them. These rankings are used to train a reward model that predicts which outputs are preferred.
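One common way to turn rankings into a training signal, sketched here under assumptions, is to expand each ranking into pairwise comparisons and apply a Bradley-Terry style loss to the reward model's scores. The response names and scores below are hypothetical, and a real reward model would be a neural network rather than a lookup table.

```python
import math
from itertools import combinations

def ranking_to_pairs(ranked_outputs):
    """Expand a best-to-worst ranking into (preferred, rejected) pairs."""
    return list(combinations(ranked_outputs, 2))

def pairwise_loss(reward_preferred, reward_rejected):
    """Bradley-Terry negative log-likelihood: -log(sigmoid(r_preferred - r_rejected))."""
    margin = reward_preferred - reward_rejected
    return math.log1p(math.exp(-margin))

# Hypothetical ranking from one evaluator, best response first.
ranking = ["response_a", "response_b", "response_c"]
pairs = ranking_to_pairs(ranking)

# Hypothetical scores produced by a reward model that is still being trained.
scores = {"response_a": 1.2, "response_b": 0.4, "response_c": -0.7}
mean_loss = sum(pairwise_loss(scores[w], scores[l]) for w, l in pairs) / len(pairs)
print(pairs)
print(mean_loss)
```

The loss shrinks as the reward model scores preferred responses higher than rejected ones, which is what "predicts which outputs are preferred" means in practice. This simple form can overflow for very large negative margins; production code would use a numerically stable log-sigmoid.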

3. Reinforcement Learning Optimization

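Finally, a reinforcement learning algorithm (commonly Proximal Policy Optimization, or PPO) uses the reward model to refine the AI system’s behavior. The model is updated to produce outputs that the reward model scores highly, usually with a penalty that keeps it close to the supervised fine-tuned starting point.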
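The two ingredients most often associated with this stage can be sketched for a single sample: a reward shaped by a KL-style penalty against a reference model, and PPO's clipped surrogate objective. This is a minimal sketch, not a training loop; the function names, `kl_coef`, and `clip_eps` defaults are assumptions chosen for illustration.

```python
import math

def shaped_reward(reward_model_score, logp_policy, logp_reference, kl_coef=0.1):
    """Reward model score minus a penalty for drifting from the reference model."""
    return reward_model_score - kl_coef * (logp_policy - logp_reference)

def ppo_clipped_objective(logp_new, logp_old, advantage, clip_eps=0.2):
    """PPO clipped surrogate for one sample (to be maximized)."""
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
    # Taking the minimum removes the incentive to move the ratio outside the clip range.
    return min(ratio * advantage, clipped_ratio * advantage)

# Hypothetical values: the updated policy doubles the probability of a well-rewarded output.
print(shaped_reward(1.0, logp_policy=-1.0, logp_reference=-1.5))
print(ppo_clipped_objective(math.log(2.0), math.log(1.0), advantage=1.0))
```

The clipping limits how far a single update can move the policy, and the penalty term discourages the model from chasing reward model scores at the expense of fluent, sensible output.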

These stages can be repeated as new feedback is collected, so the model keeps improving, not just in accuracy but in usefulness and alignment.

Why RLHF Matters for Modern AI Systems

1. Aligning AI with Human Intent

One of the biggest challenges in AI is the “alignment problem”—ensuring that models behave according to human values. RLHF directly addresses this by embedding human judgment into the training process.

Rather than guessing what users want, the model learns from explicit human feedback, resulting in outputs that are more contextually appropriate and aligned with real-world expectations.

2. Improving User Experience and Trust

Modern AI systems are increasingly user-facing. Whether the product is a chatbot, a virtual assistant, or an enterprise copilot, user trust is critical.

RLHF enhances:

- Response relevance

- Tone and clarity

- Safety and compliance

By optimizing for human satisfaction, RLHF enables AI systems to deliver more intuitive and reliable interactions.

3. Reducing Harmful or Biased Outputs

Raw LLMs trained on large-scale internet data can inherit biases or generate unsafe content. RLHF introduces a corrective mechanism.

Human reviewers can flag:

- Toxic or harmful responses

- Misinformation

- Biased or inappropriate outputs

This feedback helps steer models toward safer behavior, making RLHF essential for responsible AI deployment.

4. Enabling Continuous Improvement

Unlike static training pipelines, RLHF supports iterative refinement. As new use cases emerge, additional human feedback can be incorporated to improve performance.

This adaptability is particularly valuable in enterprise environments where requirements evolve rapidly.

The Role of High-Quality Training Data in RLHF

The effectiveness of RLHF is fundamentally tied to the quality of human feedback data. Poorly labeled or inconsistent feedback can degrade model performance or introduce new biases.

This is where the expertise of a specialized data annotation company becomes critical.

At Annotera, we emphasize that the impact of high-quality training data on LLM performance is not just a theoretical concept; it is a measurable driver of model success. High-quality annotations ensure:

- Consistent evaluation criteria

- Domain-specific accuracy

- Reduced noise in reward modeling

Organizations leveraging data annotation outsourcing gain access to scalable, high-quality human feedback pipelines that are essential for effective RLHF implementation.

RLHF Annotation Services: A Strategic Advantage

Implementing RLHF at scale is resource-intensive. It requires:

- Skilled human annotators

- Robust quality control mechanisms

- Domain expertise

- Scalable infrastructure

This is why many organizations turn to specialized RLHF Annotation Services providers like Annotera.

Our approach focuses on:

- Curating diverse and representative feedback datasets

- Ensuring annotation consistency through rigorous QA processes

- Leveraging domain experts for high-stakes use cases

- Scaling annotation workflows without compromising quality

By combining human intelligence with structured annotation pipelines, we enable organizations to unlock the full potential of RLHF.

Challenges and Limitations of RLHF

While RLHF is powerful, it is not without challenges:

1. Scalability Constraints

Human feedback is inherently expensive and time-consuming to collect, making large-scale implementation challenging.

2. Subjectivity in Feedback

Human preferences can vary, leading to inconsistencies in reward modeling.
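One way to quantify this subjectivity is an inter-annotator agreement statistic such as Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below assumes two annotators each picked the better of two responses ("A" or "B") for the same prompts; the labels are hypothetical.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty set of items")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if each annotator labeled at random with their own label frequencies.
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # Both annotators used a single identical label throughout.
    return (observed - expected) / (1.0 - expected)

# Hypothetical preference labels from two annotators over four prompts.
annotator_1 = ["A", "A", "B", "B"]
annotator_2 = ["A", "B", "B", "B"]
print(cohens_kappa(annotator_1, annotator_2))  # 0.5
```

Low kappa on a batch is a signal to revisit the annotation guidelines or adjudicate the disputed items before they reach reward model training.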

3. Risk of Bias Amplification

If feedback is not diverse or representative, models may learn biased behaviors.

4. Over-Optimization

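Models may “overfit” to the reward model, exploiting patterns that earn high scores without actually being better responses. This reduces generalization and is one reason the optimization step is usually constrained to stay close to the original model.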

Addressing these challenges requires robust annotation strategies, diverse datasets, and continuous monitoring—areas where experienced annotation partners add significant value.

The Future of RLHF in AI Development

RLHF is rapidly evolving. Emerging approaches such as:

- Reinforcement Learning from AI Feedback (RLAIF)

- Direct Preference Optimization (DPO)

- Hybrid human-AI feedback loops

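are extending and, in some cases, simplifying the original RLHF pipeline. RLAIF substitutes AI-generated preference labels for some human labels, while DPO trains directly on preference pairs without a separate reward model.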
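To make the DPO idea concrete, its loss for a single preference pair can be written in a few lines. This is a minimal sketch assuming we already have the summed log-probabilities of the chosen and rejected responses under both the policy being trained and a frozen reference model; the argument names and the `beta` default are illustrative.

```python
import math

def dpo_loss(logp_chosen, logp_rejected, ref_logp_chosen, ref_logp_rejected, beta=0.1):
    """DPO loss for one preference pair: -log(sigmoid(beta * log-ratio difference))."""
    chosen_logratio = logp_chosen - ref_logp_chosen
    rejected_logratio = logp_rejected - ref_logp_rejected
    margin = beta * (chosen_logratio - rejected_logratio)
    return math.log1p(math.exp(-margin))

# Hypothetical log-probabilities: the policy has shifted toward the chosen response.
improved = dpo_loss(-4.0, -9.0, ref_logp_chosen=-6.0, ref_logp_rejected=-6.0)
unchanged = dpo_loss(-6.0, -6.0, ref_logp_chosen=-6.0, ref_logp_rejected=-6.0)
print(improved < unchanged)
```

The loss falls as the policy raises the probability of the preferred response relative to the reference model, so the human preference data is still doing the work even though no separate reward model is trained.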

However, the core principle remains unchanged: human input is indispensable for building AI systems that are not only intelligent but also aligned, safe, and trustworthy.

Conclusion

Reinforcement Learning from Human Feedback (RLHF) has become a cornerstone of modern AI development. By integrating human judgment into the training process, it bridges the gap between raw computational capability and real-world usability.

For organizations deploying AI at scale, RLHF is not optional—it is essential.

At Annotera, we help businesses operationalize RLHF through expert-driven annotation pipelines, scalable infrastructure, and a commitment to quality. Whether through data annotation outsourcing or specialized RLHF Annotation Services, our goal is to ensure that AI systems are not just powerful—but aligned with the people they serve.

In the evolving AI landscape, the future belongs to systems that understand humans—not just data. RLHF is the key to making that future a reality.